TypeScript for quality of web code

In this blog post, I shall discuss the programming language TypeScript as a way to improve the quality of web code. I shall illustrate this with a

Game of Life project on GitHub

It's a simple web application that visualizes

Conway's Game of Life

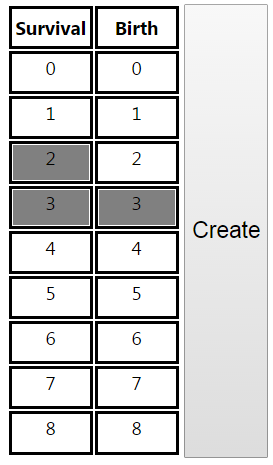

with rules that can be chosen by the user. You can play with the hosted web application by clicking on the image below.

The demo will produce animations like those in the two images below.

Animation from our TypeScript demo

Animation with alternative rules

Instructions for building this project from source follow later in this post.

TypeScript emerged from Microsoft and is open source. Support for it is growing, for example in Microsoft Visual Studio and in the Atom editor. (Visual Studio in particular has its “IntelliSense” technology working for TypeScript, as well as some basic refactoring.) Also, Google and Microsoft will apparently write version 2 of the important AngularJS framework in TypeScript. (There was a link here to a blog post by a Microsoft employee, but the target was removed.)

Moreover, a top computer scientist, Phil Wadler, has come up with an interesting research grant about improving TypeScript.

(Incidentally, Phil Wadler is from my own academic field, programming language theory, and became a professor at the School of Informatics of Edinburgh University soon after I left there, having finished my PhD.)

Still, this blog post isn’t specifically about TypeScript. TypeScript just acts as a representative of any decent, statically-typed language that compiles into JavaScript.

Disclaimers

First, I am not a web programmer. (I design business software on the .NET platform.) In this post I consider quality measures that are common for other languages and transfer them to the JavaScript technology stack.

Second, to those who know my academic background: I’m still interested in programming language theory. But I’m untheoretical here on purpose, since I think this topic is best presented as software engineering.

Thanks

I would like to thank my employer Interflex Datensysteme GmbH & Co. KG for allowing me to do much of this work on our “Innovation Fridays”.

Overview of this post

- Prelude: the unique rôle of JavaScript

- Ways of maintaining code quality

- TypeScript

- Test-driven design with TypeScript

- Our build process

Prelude: the unique rôle of JavaScript

Remarkably, JavaScript is so far the only programming language that almost every computer user can run straight away, since we all have web browsers.

The beginnings of JavaScript are humble compared to the present. Quoting the "JavaScript" entry on Wikipedia:

Initially … many professional programmers denigrated the language because its target audience consisted of Web authors and other such “amateurs”, among other reasons. The advent of Ajax returned JavaScript to the spotlight and brought more professional programming attention. The result was a proliferation of comprehensive frameworks and libraries, improved JavaScript programming practices, and increased usage of JavaScript outside Web browsers, as seen by the proliferation of server-side JavaScript platforms.

One should also mention the ever-faster JavaScript engines, for example the V8 engine of Google’s Chrome browser.

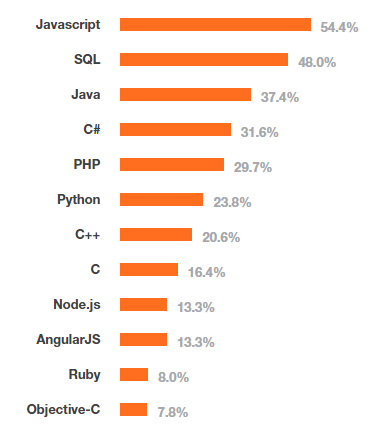

All statistics point in the same direction: JavaScript has become the most used programming language. Even technologies around JavaScript still make it into the charts, like “Node.js” and AngularJS in the 2015 StackOverflow survey:

This blog post focuses on languages that can replace JavaScript as a source language, to improve quality and productivity in larger applications, while staying inside the JavaScript technology stack. So we are talking about web programming, and about programming on server-side JavaScript platforms like “Node.js”.

There are two basic approaches to replacing JavaScript as a source language:

- Avoid JavaScript completely, and make browsers understand some improved language. This is what Google tried with its Dart language.

- Translate some improved language into JavaScript with a source-to-source compiler, a so-called transpiler. One tends to start with a language that is somewhat similar to JavaScript, like CoffeeScript or TypeScript. Often, the JavaScript produced by a transpiler can be easily matched with the source code.

(Incidentally, CoffeeScript is probably the most popular JavaScript replacement, and definitely worth a look. However, it lacks static types, and thus doesn’t meet my quality demands. There was a link here to an interesting discussion of TypeScript vs. CoffeeScript, but the target has gone offline.)

Google’s original Dart approach was good in that it avoided the legacy language JavaScript completely, ultimately resulting in better machine code. But other companies did not adopt Dart, and in 2015 Google changed its strategy to compiling Dart into JavaScript! See this 2015 news about the Dart language.

(For completeness, it should be mentioned that JavaScript is also increasingly used as a transpiler target for non-web languages like C and C++. For an overview, see the Wikipedia entry on JavaScript. But that’s not the focus of this post.)

Ways of maintaining code quality

Every software engineer who has taken part in a major, long-lived software project knows that it is hard to maintain enough code quality to keep development from bogging down under bugs, illegible code, unwieldy integration processes, and the resulting fear of change.

To maintain code quality, software professionals have developed various best practices, a few of which I shall now discuss.

Test-driven design (TDD)

One very important quality measure is test-driven design (TDD). Here, one writes unit tests that, when executed, check the behavior of code units. Ideally, all features of all code units are covered by such tests. The current stage of the software (the “build”) is deemed okay only if all tests succeed. Unit tests are used only by the developers and are never shipped to the customer.

Static type system

Unit tests are one way to catch programming mistakes early. There is another kind of error protection, so obvious that it’s easily overlooked: a static type system. For example, an ill-typed Java program won’t even compile. In some cases, the type error will even be shown in the editor before compilation. Type systems that work by just looking at the code (as opposed to running the code) are called static.

JavaScript has a type system, but it finds errors only when the code is run. Such type systems are called dynamic. Unlike static type systems and unit tests, a dynamic type system on its own is no safeguard against releasing broken software, because the code path containing the type error may never run before the software is shipped. This argument alone shows that JavaScript’s lack of a static type system is a problem for code quality!
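To make the difference concrete, here is a small sketch. The function describeTemperature is made up for illustration and is not part of our demo. The commented-out line shows the kind of slip that plain JavaScript would only report if the verbose branch actually ran. tsc rejects that line before the code ever runs, with an error along the lines of “Property 'toUpperCase' does not exist on type 'number'”.

```typescript
// Hypothetical example function, not part of the Game of Life demo.
function describeTemperature(celsius: number, verbose: boolean): string {
    if (verbose) {
        // A slip like the following line would go unnoticed in plain JavaScript
        // until this rarely used branch runs. tsc rejects it at compile time,
        // because celsius has the static type number, which has no toUpperCase:
        // return "Temperature: " + celsius.toUpperCase();
        return "Temperature: " + celsius.toFixed(1) + " degrees Celsius";
    }
    return celsius.toFixed(0);
}

const shortForm = describeTemperature(21.456, false); // "21"
const longForm = describeTemperature(21.456, true);   // "Temperature: 21.5 degrees Celsius"
```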

Also, people who code in, e.g., C, C++, Java, or C# often rely on the static type system without thinking about it. So it is a grave matter that this safety net of static types, which is standard in large parts of the software industry, is missing from the widely used JavaScript!

Adding a static type system to JavaScript is the main benefit of TypeScript. More on that soon.

Quality-oriented build process

In the scope of this post, by “build process” I mean the procedure that does two things: (1) it turns the source code (which will be TypeScript + HTML + CSS) into executable code (which will be JavaScript + HTML + CSS), and (2) it runs all quality checks, ranging from linters to unit tests. (A linter is yet another kind of static code check, which looks for dubious coding patterns beyond type errors; see the Wikipedia entry on “lint”.)

The build process clearly has a lot to do with quality and productivity. It must be fast and hassle-free, and it must ensure quality. We shall spend some time discussing the build process of our TypeScript demo.

TypeScript

Roughly speaking, TypeScript is a JavaScript dialect with static types. I shall now describe the most important aspects of the language. For an even deeper introduction, see typescriptlang.org.

The type system

Basic types

Firstly, TypeScript has some basic types, among them number, boolean, string, and Array.

It is great to have such types, since they rule out mistakes, in particular oversights, that might otherwise result in long debugging sessions.

But it must be said that these types have deficits. First, they all allow null values. (In Java, say, strings can also be null, but that’s a questionable aspect of Java.) Worse, values of basic type can also be undefined. With numbers, there are more problems: a value of type number can be Infinity, -Infinity, or NaN (“Not a Number”, behold the irony). Also, TypeScript has no type for integers.

Basically, these basic types of TypeScript are static versions of the corresponding dynamic types of JavaScript. That’s much better than nothing, but not as good as one could hope. It is worth pondering how TypeScript’s basic types could be improved, but we must leave that to further work, like Phil Wadler’s aforementioned research grant.
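The following sketch shows the gaps for numbers. Every declaration in it type-checks, although none of the values is an ordinary integer. The sketch leaves out null and undefined, since whether the compiler accepts those in a number depends on the TypeScript version and compiler options in use.

```typescript
// Each declaration type-checks: the static type number cannot exclude
// NaN, the infinities, or fractions.
const notANumber: number = NaN;
const infinite: number = 1 / 0;   // Infinity
const fraction: number = 2.5;     // there is no separate integer type

// NaN is the only value that is not equal to itself.
const nanEqualsItself = notANumber === notANumber;          // false
// The global isFinite is false for NaN, Infinity and -Infinity.
const infiniteIsFinite = isFinite(infinite);                // false
// A fraction differs from its rounded-down value.
const fractionIsWhole = Math.floor(fraction) === fraction;  // false
```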

For completeness, it should be mentioned that the basic types also include an enum type, an any type, and a void type.
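Here is a brief sketch of these three types. It uses a made-up enum CellState that merely hints at our Game of Life domain; the demo itself may model cells differently.

```typescript
// Hypothetical enum; TypeScript numbers its members 0, 1, ... by default.
enum CellState { Dead, Alive }

// void: the function is called for its effect and returns no useful value.
function logState(state: CellState): void {
    // Numeric enums also map back from a value to its name.
    console.log("Cell is " + CellState[state]);
}

// any: static type checking is switched off for this variable.
let anything: any = 42;
anything = "now a string";                   // accepted, although 42 was a number
const textLength: number = anything.length;  // also accepted; no checks happen on any

logState(CellState.Alive);                   // prints "Cell is Alive"
```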

Classes and interfaces

Classes in TypeScript look quite similar to those of Java. Here is an example:

```typescript
class Person {
    private name: string;
    private yearOfBirth: number;

    constructor(name: string, age: number) {
        this.name = name;
        // Store the year of birth, so that getAge() stays correct in later years.
        this.yearOfBirth = new Date().getFullYear() - age;
    }

    getName() { return this.name; }

    getAge() { return new Date().getFullYear() - this.yearOfBirth; }
}
```

However, JavaScript’s approach to object-oriented programming is prototype based. Accordingly, the TypeScript transpiler translates the above class into JavaScript by using a well-known pattern:

```javascript
var Person = (function () {
    function Person(name, age) {
        this.name = name;
        // Store the year of birth, so that getAge() stays correct in later years.
        this.yearOfBirth = new Date().getFullYear() - age;
    }
    Person.prototype.getName = function () {
        return this.name;
    };
    Person.prototype.getAge = function () {
        return new Date().getFullYear() - this.yearOfBirth;
    };
    return Person;
})();
```

TypeScript also knows interfaces, for example:

```typescript
interface IPerson {
    getName(): string;
    getAge(): number;
}
```

Now an instance of Person can be used wherever a function expects an IPerson:

```typescript
function showName(p: IPerson) {
    window.alert(p.getName());
}

showName(new Person("John Doe", 42));
```

Note that, unlike in Java, Person need not implement IPerson explicitly for this to work; what matters is that Person has the members IPerson requires. So we have structural typing.
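Structural typing goes beyond classes. In the following sketch, a plain object literal that was never declared as an IPerson is accepted, simply because it has the right members. The names describePerson and anonymous are made up, and the interface is repeated so that the snippet stands alone.

```typescript
interface IPerson {
    getName(): string;
    getAge(): number;
}

// Hypothetical helper; unlike showName, it returns a string instead of calling window.alert.
function describePerson(p: IPerson): string {
    return p.getName() + ", aged " + p.getAge();
}

// No class and no "implements IPerson": just an object with matching members.
const anonymous = {
    getName: () => "Jane Roe",
    getAge: () => 35,
};

const description = describePerson(anonymous); // "Jane Roe, aged 35"
```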

Of course, a class can extend a parent class:

```typescript
class Resident extends Person {
    private address: string;

    constructor(name: string, age: number, address: string) {
        super(name, age);
        this.address = address;
    }
}
```

Here, TypeScript generates the following code. The helper __extends is defined elsewhere in the output file by a five-liner:

```javascript
var Resident = (function (_super) {
    __extends(Resident, _super);
    function Resident(name, age, address) {
        _super.call(this, name, age);
        this.address = address;
    }
    return Resident;
})(Person);
```

So the benefits of TypeScript classes and interfaces should be clear now. Firstly, they increase readability compared with JavaScript. Secondly, as in other programming languages, classes and interfaces (1) help catch errors at compile time, (2) guide code design, and (3) help different teams negotiate code contracts.

Generic types

Generic types, also called generics, are types that depend on types. Like Java, C#, and many other languages, TypeScript has generics:

On the one hand, there is the built-in type Array<T>, whose values are the arrays with elements of type T. So we have Array<number>, Array<string>, and so on. TypeScript also understands the popular notation T[] as a shorthand for Array<T>.

On the other hand, we can define our own generics, as classes or interfaces. For example, our demo project has an interface Seq<T> for arbitrary enumerable collections, not just arrays. Our Seq<T> is in the same spirit as the IEnumerable<T> interface known to C#-programmers. We shall explain Seq<T> more in the next paragraph.
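Here is a small sketch of the built-in case and of a self-defined generic. The helper lastElement is hypothetical. It works for arrays of any element type, and the compiler infers T at each call.

```typescript
// Array<T> and T[] denote the same type.
const primes: Array<number> = [2, 3, 5, 7];
const samePrimes: number[] = primes;

// Hypothetical generic helper: one definition serves every element type T.
function lastElement<T>(items: T[]): T {
    if (items.length === 0) {
        throw new Error("lastElement: empty array");
    }
    return items[items.length - 1];
}

const lastPrime: number = lastElement(samePrimes);              // T inferred as number
const lastWord: string = lastElement(["game", "of", "life"]);   // T inferred as string
```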

Function types

Here is our aforementioned generic interface Seq<T> for arbitrary enumerable collections. We use it here as a realistic example to motivate function types.

```typescript
interface Seq<T> {
    filter(condition: (element: T) => boolean): Seq<T>;
    map<TOut>(transform: (element: T) => TOut): Seq<TOut>;
    // some stuff we leave out in this blog post
    toArray(): T[];
}
```

The filter() function is meant for transforming a sequence into a shorter one by keeping only those elements that satisfy a given condition. Here, the argument condition of the filter function has the function type (element: T) => boolean. That is, condition is a function that takes a value of type T and produces a boolean, which decides whether the value should be in the sequence returned by the filter function.

I leave it to the reader to discover the intended meaning of the map function.

Our Seq interface enables very elegant, type safe method chaining, for example

```typescript
var seq: Seq<number> = ... // instance of some implementing class
seq.filter(elmt => elmt > 42).map(elmt => elmt * 2).toArray();
```

The method chain above starts with some sequence seq and transforms it into a sub-sequence that consists only of the elements greater than 42. It then multiplies every element of that sub-sequence by two and transforms the resulting sequence into an array. The terms elmt => elmt > 42 and elmt => elmt * 2 are anonymous functions, of types (element: number) => boolean and (element: number) => number respectively. The latter, for example, is the function that multiplies its input by two.
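To see the interface at work, here is a minimal sketch of an array-backed implementation. The class ArraySeq and its body are my illustration and not the implementation from our demo, which also has members left out in this post. It simply delegates to JavaScript’s built-in Array methods filter and map.

```typescript
// Reduced Seq<T>, with the members shown above.
interface Seq<T> {
    filter(condition: (element: T) => boolean): Seq<T>;
    map<TOut>(transform: (element: T) => TOut): Seq<TOut>;
    toArray(): T[];
}

// Illustrative implementation backed by a plain array.
class ArraySeq<T> implements Seq<T> {
    constructor(private items: T[]) {}

    filter(condition: (element: T) => boolean): Seq<T> {
        // Array.prototype.filter keeps the elements for which condition is true.
        return new ArraySeq(this.items.filter(element => condition(element)));
    }

    map<TOut>(transform: (element: T) => TOut): Seq<TOut> {
        // Array.prototype.map applies transform to every element.
        return new ArraySeq(this.items.map(element => transform(element)));
    }

    toArray(): T[] {
        // Return a copy, so callers cannot modify the sequence's internal array.
        return this.items.slice();
    }
}

const numbers: Seq<number> = new ArraySeq([40, 41, 42, 43, 44]);
const doubled = numbers.filter(elmt => elmt > 42).map(elmt => elmt * 2).toArray();
// doubled is [86, 88]
```

Each call returns a new ArraySeq, so the original sequence stays untouched. This is what makes the method chain safe to build up step by step.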

If you know C#, you have probably figured out by now that our Seq<T> yields an analogue to LINQ.

It should be clear now that function types can be very useful, in particular together with generics.

Incidentally, JavaScript itself provides anonymous functions. But they have a terrible syntax: for example, the JavaScript counterpart of elmt => elmt * 2 is

```javascript
function (elmt) { return elmt * 2; }
```

Streamlining the syntax for anonymous functions is a simple, yet very important feature of TypeScript.

This ends our discussion of TypeScript’s type system. There is much more to say; see typescriptlang.org.

Module systems, and using libraries

I shall first illustrate the importance of module systems by describing two problems they solve. Then I shall discuss actual module systems, first for JavaScript and then for TypeScript.

Namespaces

Providing namespaces is an obvious benefit of a module system. In larger projects, there are often multiple functions, classes, or interfaces with the same name. For example, there might be multiple classes called Provider or Parser or Service, or multiple functions called create. Each such class or function lives in some larger code unit, for example one code unit for database access, one for business logic, and so on. Any module system worth the name allows naming these larger units, for example Database and BusinessLogic, and addressing the elements in those units by their qualified names, for example Database.Service and BusinessLogic.Service.
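Within a single file, the effect can be sketched with TypeScript’s namespace keyword. The classes below are made up and only echo the example names from this paragraph. With ECMAScript 6 modules, which our demo uses, the qualifying name would instead come from an import such as import * as Database from "./Database", where the file name is hypothetical.

```typescript
// Two classes with the same simple name, kept apart by their enclosing units.
namespace Database {
    export class Service {
        describe(): string { return "database access"; }
    }
}

namespace BusinessLogic {
    export class Service {
        describe(): string { return "business rules"; }
    }
}

// Qualified names make it unambiguous which Service is meant.
const dbService = new Database.Service();
const logicService = new BusinessLogic.Service();
```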

Maintainable extensibility

This is a crucial point, which is strangely absent from most discussions of module systems. JavaScript is a great example: major web applications tend to comprise many JavaScript files. These files must be loaded into the browser. The old-fashioned way to do this is to include, in the main HTML page, one script tag for each required file. So the main HTML page will contain code like this:

```html
<script src="AjaxUtils.js"></script>
<script src="MathFunctions.js"></script>
<script src="Graphics.js"></script>
<!-- and much more -->
```

This may look harmless at first. But it is a grave problem for medium-sized or large projects: there can be hundreds of required JavaScript files, and they can form a complex dependency tree, for example

- App.html needs App.js

- App.js needs ThirdPartyLibrary.js

- ThirdPartyLibrary.js needs OtherLibrary.js

Now “ThirdPartyLibrary.js” gets an update and replaces “OtherLibrary.js” with some “OtherLibrary2.js”. Then “App.html” would have to be changed to load “OtherLibrary2.js” instead of “OtherLibrary.js” via a script tag, even though the libraries used by “ThirdPartyLibrary.js” should be of no concern to “App.html”! Now imagine a large JavaScript application with many evolving home-brewed and third-party libraries. The script tags in “App.html” would become an unmaintainable editing bottleneck!

A module system removes the need to maintain a “central registry” of code units, typically by introducing a syntax of import statements by which each module describes what it needs from other modules. For example, a TypeScript file in our demo starts like this:

```typescript
import {Array2D} from "Imports/Core/Arrays";
import {RectRenderingContext, Renderer} from "Interfaces";
import {checkDefinedAndNotNull, checkInt} from "Imports/Core/TypeChecks";

export function create(context: RectRenderingContext, pointSize: number): Renderer {
    return new RectRenderer(context, pointSize);
}

class RectRenderer<T> { // more code follows
```

Here we import various members (“Array2D”, “RectRenderingContext” and so on) from other modules called “Imports/Core/Arrays”, “Interfaces”, and “Imports/Core/TypeChecks”. These modules are just other files. Our application’s HTML page needs no script tags for these! (By the way, our module also exports a member, which therefore can be imported by other files. By contrast, the class “RectRenderer” in our module is not visible from other modules, since it lacks the export keyword.)

Module systems for JavaScript

Before ECMAScript 6, which arrived (mostly) in 2015, JavaScript had no module syntax, that is, no syntax for imports and exports.

Besides a module syntax, a JavaScript module system also needs a module loader to ensure that the imported modules are loaded at runtime. JavaScript still has no module loader of its own.

There are two main standards for JavaScript module systems. Both are from third parties, and both provide a module syntax and a default module loader: (1) CommonJS, whose default module loader comes with “Node.js”, and (2) Asynchronous Module Definition (AMD), whose default module loader is “RequireJS”.

The ECMAScript 6 module syntax is great news: we can now choose that neutral module syntax, and (ideally) swap module loaders underneath. But there is still a catch: at the time of writing, browsers still do not support the new module syntax. So authors of ECMAScript 6 code still need a transpiler that translates the new syntax into, say, CommonJS or AMD. (As we shall see, TypeScript is such a transpiler.)

For a more detailed overview of JavaScript module systems, see this post by Dr. Axel Rauschmayer.

Module systems for TypeScript

The module situation for TypeScript is like that for JavaScript: we need a module syntax, and a module loader. TypeScript has its own history concerning module syntax; but crucially, it adopted the ECMAScript 6 module syntax starting with TypeScript 1.5! So every TypeScript project should use ECMAScript 6 modules now. (As our demo does.)

The module loaders for TypeScript are the same ones as for JavaScript, since TypeScript is compiled to JavaScript before the code is run.

Here TypeScript adds another great benefit besides types: it can translate ECMAScript 6 module syntax, which is not yet supported by browsers, into old-style module syntax. Specifically, the TypeScript transpiler tsc has a command-line option for the targeted module system. For example, tsc --module amd produces AMD module code. Besides amd, the choices include commonjs, system, and umd. (The list has been growing with new TypeScript versions.) Our demo specifies all tsc command-line options in the “tsconfig.json” file, which is a standard mechanism.

Besides the ECMAScript 6 module syntax, TypeScript also has a notion of “namespaces” for optional use. Unlike TypeScript’s modules, its namespaces do not correspond one-to-one with files: there can be different namespaces within one file, and namespaces that stretch across several files. Our demo does not use namespaces.
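The following sketch illustrates that a namespace is not tied to a single block of code. The compiler merges two separate declarations of the made-up namespace Shapes. The same merging happens when such declarations sit in different non-module files that are compiled together.

```typescript
// First declaration block of the namespace.
namespace Shapes {
    export function square(side: number): number {
        return side * side;
    }
}

// A second, separate block with the same name: TypeScript merges it with the first,
// so Shapes now exposes both functions.
namespace Shapes {
    export function rectangle(width: number, height: number): number {
        return width * height;
    }
}

const squareArea = Shapes.square(3);           // 9
const rectangleArea = Shapes.rectangle(2, 5);  // 10
```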

Using JavaScript libraries from TypeScript

There are many great libraries written in JavaScript. How do we use them from TypeScript? Luckily, there is a mechanism analogous to the way C programs are compiled against native libraries by using header files: for a JavaScript module, one can create a so-called ambient declaration. This is a TypeScript file that describes the public elements of the JavaScript module, without the implementation. Such files typically have the extension “.d.ts”.

Our demo project is well suited for demonstrating this, since it has both a Core library, which contains basics like Array2D<T>, and the actual GameOfLife application. Building the latter does not imply building the former. Instead, GameOfLife imports the JavaScript “binaries” yielded by Core, together with their ambient declarations. For example, Core has the aforementioned class Array2D<T>, in a TypeScript implementation file named “Arrays.ts”. Building Core yields a JavaScript file “Arrays.js”. Since our build of Core uses the command-line switch --declaration, it also emits an ambient declaration file “Arrays.d.ts”:

```typescript
export declare class Array2D<T> {
    height: number;
    width: number;
    constructor(height: number, width: number, initialValue: T);
    set(row: number, column: number, value: T): void;
    get(row: number, column: number): T;
}
```

When the build for GameOfLife encounters an import like this

```typescript
import {Array2D} from "./Imports/Core/Arrays";
```

it is satisfied with “Arrays.d.ts” instead of “Arrays.ts”. However, we must ensure that the file “Arrays.js” is found at run time.
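For illustration, here is a sketch of what could sit behind such a declaration. It is my own guess and not the actual “Arrays.ts” from Core. The class stores the cells of a height × width grid in one flat array, row by row. Apart from the export keyword, which is left out so that the snippet runs on its own, it has the same public members as the declaration above.

```typescript
// Hypothetical implementation with the public members of the ambient declaration.
class Array2D<T> {
    private cells: T[] = [];

    constructor(public height: number, public width: number, initialValue: T) {
        for (let i = 0; i < height * width; i++) {
            this.cells.push(initialValue);
        }
    }

    set(row: number, column: number, value: T): void {
        this.cells[this.indexOf(row, column)] = value;
    }

    get(row: number, column: number): T {
        return this.cells[this.indexOf(row, column)];
    }

    // Row-major layout: row r occupies the flat indices from r * width up to r * width + width - 1.
    private indexOf(row: number, column: number): number {
        if (row < 0 || row >= this.height || column < 0 || column >= this.width) {
            throw new RangeError("Array2D: (" + row + ", " + column + ") is out of range");
        }
        return row * this.width + column;
    }
}
```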

TypeScript’s ambient declaration syntax goes way beyond our Array2D example. For more, see typescriptlang.org.

Crucially, there is a repository containing TypeScript ambient declarations for a great number of libraries. That repository is called DefinitelyTyped.

Test-driven design with TypeScript

Test-driven design involves writing tests for code units, module by module, class by class, and function by function. Each test, when run, can succeed or fail. The tests should cover as much functionality as possible. If tests fail, the software cannot be released.

Overview of test frameworks

Many programming languages have third-party test frameworks, that is, libraries with primitives for writing unit tests. Such primitives are mostly assertions, for example for checking that two values are equal. In contrast to a mere assertion library, a test framework also has ways to mark a class or a function as a test.
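To make the distinction concrete, here is a deliberately tiny sketch of both parts. It is not how QUnit or any other real framework is implemented. The names assertEqual, registerTest, and runAllTests are made up. assertEqual is an assertion, registerTest marks a function as a test, and runAllTests runs the registered tests and counts failures.

```typescript
// An assertion: throws if the condition it checks does not hold.
function assertEqual<T>(actual: T, expected: T): void {
    if (actual !== expected) {
        throw new Error("expected " + String(expected) + " but got " + String(actual));
    }
}

interface TestCase {
    name: string;
    body: () => void;
}

const registeredTests: TestCase[] = [];

// Marking a function as a test: it is stored now and executed later.
function registerTest(name: string, body: () => void): void {
    registeredTests.push({ name: name, body: body });
}

// The runner executes every test; a failing test does not stop the others.
function runAllTests(): number {
    let failures = 0;
    for (const testCase of registeredTests) {
        try {
            testCase.body();
            console.log("PASS " + testCase.name);
        } catch (e) {
            failures++;
            const message = e instanceof Error ? e.message : String(e);
            console.log("FAIL " + testCase.name + ": " + message);
        }
    }
    return failures;
}

registerTest("addition", () => assertEqual(1 + 1, 2));
registerTest("concatenation (deliberately wrong)", () => assertEqual("a" + "b", "abc"));

const failureCount = runAllTests(); // logs one PASS line and one FAIL line
```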

A well-known Java test framework is JUnit. For Microsoft’s .NET framework, there is, for example, the Microsoft Unit Test Framework, or the third-party framework NUnit.

JavaScript too has unit test frameworks, among them Jasmine, QUnit, and Mocha. There is also the assertion library Chai.

Jasmine, QUnit, Mocha, and Chai can also be used from TypeScript, because ambient declarations for them are available in the aforementioned DefinitelyTyped repository! We see here how elegantly TypeScript continues to use JavaScript technology.

An example unit test file

Our demo happens to use the QUnit test framework, since QUnit is widely adopted and I like its style. I shall now discuss an example of unit tests, namely the file “Sequences_ArraySeq_test.ts” from our demo. Don’t read the file just yet, since it’s best to start the discussion in the middle.

```typescript
import {createArraySeq, Seq} from "Sequences";
import {assertDefinedAndNotNull} from "TypeAssertions";

var seq: Seq<number>; // "var" because otherwise R# makes a type inference error
let functionName: string;
let name = (testCaseName: string) => "ArraySeq, " + functionName + ": " + testCaseName;

QUnit.testStart(() => {
    seq = createArraySeq([0, 1, 2, 3]);
});

functionName = "constructor";

test(name("Argument is defined"), () => {
    assertDefinedAndNotNull("seq", createArraySeq)();
});

functionName = "filter";

test(name("Argument is defined"), () => {
    assertDefinedAndNotNull("condition", seq.filter)();
});

test(name("function works"), () => {
    const result = seq.filter(n => [1, 3].indexOf(n) >= 0).toArray();
    deepEqual(result, [1, 3]);
});

// ... more tests here
```

Our test file uses three kinds of elements from QUnit. First, the function test, which declares a single test. Second, the function deepEqual, for comparing two values including nested properties. Third, the function testStart, for declaring code that runs before each test. QUnit has several other features not used in our test file, but our file contains enough QUnit to convey the spirit of that framework.

The test function of QUnit takes two arguments: the name of the test, and the body of the test, which is wrapped in an anonymous function so that its execution is delayed. The name function is my own convenience helper, which groups our tests by name: first the name of the tested class, then the name of the tested function, and finally the test case.

The most straightforward test is probably this one:

```typescript
test(name("function works"), () => {
    const result = seq.filter(n => [1, 3].indexOf(n) >= 0).toArray();
    deepEqual(result, [1, 3]);
});
```

We are testing the filter function of our ArraySeq class here. Before each test, the testStart callback creates our ArraySeq with the values 0, 1, 2, 3. We apply our filter function with a predicate that yields true only for 1 and 3. Finally, we check that the result of the filtering, when turned into an array, is equal to [1, 3].

Then there are tests like this:

```typescript
test(name("Argument is defined"), () => {
    assertDefinedAndNotNull("condition", seq.filter)();
});
```

This test checks that our filter function throws an ArgumentException when its argument is null or undefined. Similar checks also occur in Java or C# unit tests, but only for null, since Java and C# have no undefined. Our ArgumentException here is a class defined in the module Exceptions of our Core project.

Since many unit tests check how a testee reacts to bad arguments, I provided an assertion library, TypeAssertions, which contains the above assertDefinedAndNotNull and some other assertions.

There is also a production-code library TypeChecks that complements TypeAssertions. For example, TypeChecks contains a function

```typescript
checkDefinedAndNotNull(argumentName: string, value: any)
```

That function, when put in the first line of another function f, throws an ArgumentException for argumentName if value is null or undefined.
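Here is a sketch of how such a check and its exception might look. Only the signature of checkDefinedAndNotNull comes from our demo. The function bodies, the shape of ArgumentException, and the example function firstChar are assumptions for illustration.

```typescript
// Hypothetical stand-in for the demo's ArgumentException.
class ArgumentException extends Error {
    constructor(public argumentName: string, message: string) {
        super(message);
        this.name = "ArgumentException";
    }
}

// The signature is the one from the text; the body is an assumed sketch.
function checkDefinedAndNotNull(argumentName: string, value: any): void {
    // value == null is true exactly for null and undefined.
    if (value == null) {
        throw new ArgumentException(argumentName, argumentName + " must not be null or undefined");
    }
}

// Usage: the check is the first line of the function it protects.
function firstChar(text: string): string {
    checkDefinedAndNotNull("text", text);
    return text.charAt(0);
}
```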

Unit tests for gaps in the type system

Types that admit null values are problematic. The first person to admit this is the inventor of null references, the famous computer scientist Tony Hoare. Speaking at a conference in 2009, he apologized for inventing the null reference.

Video of Tony Hoare speaking about null references

I call it my billion-dollar mistake. It was the invention of the null reference in 1965. At that time, I was designing the first comprehensive type system for references in an object oriented language (ALGOL W). My goal was to ensure that all use of references should be absolutely safe, with checking performed automatically by the compiler. But I couldn’t resist the temptation to put in a null reference, simply because it was so easy to implement. This has led to innumerable errors, vulnerabilities, and system crashes, which have probably caused a billion dollars of pain and damage in the last forty years.

JavaScript makes things worse by allowing undefined besides null. Sadly, this carries over to TypeScript, which has a static type undefined.

Worse, JavaScript and TypeScript have no integer type, only number. Not only can a value of type number be a non-integer number; it can also be null, undefined, Infinity, -Infinity, or NaN.

Now imagine we have a TypeScript project, and we want to ensure the same code quality as in Java, for example. Whenever a TypeScript function is meant for an integer argument, the type system can only ensure the argument is a number. So we are forced to add run-time checks that the argument is integer! (Our demo has such checks, using the checkInt method from our TypeChecks module.) Worse, for each argument intended to be integer, we need an extra unit test to check that an exception is thrown for a non-integer! (Our demo has such tests, using the assertInt method from our TypeAssertions module.)
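A run-time integer check in that spirit could look like the following sketch. The post does not show the real checkInt, so its signature, its error type (here RangeError), and the example function repeatWord are all assumptions.

```typescript
// Assumed signature, modeled on checkDefinedAndNotNull.
function checkInt(argumentName: string, value: number): void {
    // isFinite rules out NaN, Infinity and -Infinity (and undefined, which becomes NaN);
    // Math.floor(value) !== value rules out fractions (and null, since Math.floor(null) is 0).
    if (!isFinite(value) || Math.floor(value) !== value) {
        throw new RangeError(argumentName + " must be an integer, but was " + value);
    }
}

// The parameter type only guarantees a number; the check enforces an integer.
function repeatWord(word: string, times: number): string {
    checkInt("times", times);
    let result = "";
    for (let i = 0; i < times; i++) {
        result += word;
    }
    return result;
}
```

A matching unit test would then call repeatWord with a non-integer such as 1.5 and expect the exception. This is exactly the extra test per integer argument described above.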

So we see, in a quantitative way, how gaps in a type system force us to write more code. And the type system of TypeScript has gaps.

Still, having static types at all is very helpful, since without them we would need even more run-time checks and unit tests! And we can address the remaining gaps with libraries containing run-time checks, plus libraries containing test assertions.

Our build process

“Serious” build tools: not used here

A build process turns source code into deployable code and runs quality checks. Build tools abound, and they vary strongly between platforms. One example is the make command from the Unix world. Another is Microsoft’s MSBuild. The JavaScript world has several alternative build tools. I tried Gulp and Grunt. In our case, these must call special-purpose tools, like the TypeScript transpiler tsc or the test runner Karma. This happens via plugins, for example grunt-typescript and grunt-karma. Typically, all components (e.g. Grunt, tsc, grunt-typescript, Karma, grunt-karma, and many more) are taken from the standard repository npm of “Node.js”! (More about npm later.) And all components are versioned! I found that this leads to a jungle of versioned dependencies, which is unpleasant to maintain and upgrade. Plus, most plugins require their own configuration section in the build tool’s configuration file (e.g. Grunt’s “Gruntfile.js”), which quickly becomes bloated. When faced with this, I found a blog post, I believe by Keith Cirkel, that focused on this issue, but that post is no longer available. Following that post, I migrated to a much simpler build process, which I shall now describe.

“Node.js” as a build platform

“Node.js” is established as a server-side environment for JavaScript. It has its own package manager, npm (Node Package Manager), which points to the standard NPM repository. All tools we need reside in the NPM repository: the TypeScript transpiler tsc, our test runner Karma, the linter ESLint, the module loader RequireJS, the unit test framework QUnit, and more.

“Node.js” can be (easily) installed on various operating systems, including Windows, Mac OS, and Linux. So with “Node.js” we can define a build process that does not depend on the underlying operating system!

Our projects “Core” and “GameOfLife” each have a file “package.json” that lists all tools needed for that project.

The “package.json” file

Here is the “package.json” of our Core project:

```json
{
  "name": "Core",
  "version": "0.0.0",
  "description": "All dependencies of the Core project ",
  "main": "index.js",
  "dependencies": {},
  "devDependencies": {
    "eslint": "2.9.0",
    "karma": "0.13.22",
    "karma-chrome-launcher": "0.2.0",
    "karma-firefox-launcher": "0.1.6",
    "karma-ie-launcher": "0.2.0",
    "karma-junit-reporter": "0.3.3",
    "karma-phantomjs-launcher-nonet": "0.1.3",
    "karma-qunit": "0.1.5",
    "karma-requirejs": "0.2.2",
    "qunitjs": "1.18.0",
    "requirejs": "2.1.19",
    "tslint": "3.8.0",
    "typescript": "1.8.10"
  },
  "scripts": {
    "build": "eslint *.js && tslint *.ts && tsc && karma start",
    "quickBuild": "tslint *.ts && tsc && karma start"
  },
  "repository": {
    "type": "git",
    "url": "https://github.com/cfuehrmann/GameOfLife.git"
  },
  "keywords": [
    "key1",
    "key2"
  ],
  "author": "Carsten Führmann",
  "license": "LicenseToDo",
  "bugs": {
    "url": "https://github.com/cfuehrmann/GameOfLife/issues"
  },
  "homepage": "https://github.com/cfuehrmann/GameOfLife"
}
```

(The “package.json” of “GameOfLife” is almost the same. I have two “package.json” files, instead of one for both projects, because I intend to split the projects into two GitHub repositories, since “Core” is to be used by other projects besides “GameOfLife”.)

Let’s discuss our “package.json” file. The devDependencies section lists the tools we need. The most important ones are:

- typescript, the TypeScript transpiler

- karma, our test runner

- tslint, a TypeScript linter

There are some other packages, among them plugins that enable Karma to use other packages: karma-chrome-launcher, karma-qunit, and so on.

The meaning of the scripts section will become clear soon.

Versioned installation of all tools with a single command!

Here is a great benefit of having all tools run under “Node.js”: when we run

npm install

from the command prompt, from inside the Core directory, all packages described in “package.json” are automatically installed from the NPM repository on the web! (That might take a minute or two.) The packages are put in a subdirectory “node_modules” of the Core project. Similarly for the GameOfLife project.

Because our “package.json” file contains the package versions, it defines our tools completely and precisely!
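This precision relies on each version being exact, as it is in our file. npm also accepts version ranges such as "^2.9.0" or "~1.8.10", and with those npm install may pick a newer matching release. Here is a small TypeScript sketch of a check for unpinned entries. The function name findUnpinned and the range entry "some-hypothetical-tool" are made up for illustration, and the regular expression deliberately accepts only plain major.minor.patch versions (with an optional prerelease tag). Note that it only covers the packages listed directly; their own dependencies are resolved by npm.

```typescript
// Sketch: report devDependencies whose version is not an exact "major.minor.patch".
// A range such as "^2.9.0" or "~1.8.10" lets npm install pick a newer release.

type DependencyMap = { [name: string]: string };

// Exact version like "1.8.10", optionally with a prerelease tag like "2.0.0-beta".
const exactVersion = /^\d+\.\d+\.\d+(-[0-9A-Za-z.-]+)?$/;

function findUnpinned(deps: DependencyMap): string[] {
    return Object.keys(deps).filter(name => !exactVersion.test(deps[name]));
}

// Three entries from the Core project's package.json, plus one hypothetical range.
const devDependencies: DependencyMap = {
    "eslint": "2.9.0",
    "karma": "0.13.22",
    "typescript": "1.8.10",
    "some-hypothetical-tool": "^3.1.0"
};

// Logs an array containing only "some-hypothetical-tool".
console.log(findUnpinned(devDependencies));
```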

Take a minute to ponder the elegance of this “Node.js” setup:

- Every programmer on Windows, Linux, and Mac OS can pull our project from GitHub and build it.

- The only prerequisites are “Node.js” and npm.

- All build tools, in the correct version, can be installed with a single command.

- But the build tools don’t clog our GitHub repository – they come directly from the NPM repository.

Local vs. global installation of npm packages

The npm install command described above, when run from, e.g., the Core directory, installs the packages locally in that directory. This implies, for example, that we cannot open a command prompt and run the TypeScript transpiler by entering tsc, even though we installed that npm package. We could run tsc in this simple way if we had installed the typescript package globally, which is also possible with npm.

Indeed, many JavaScript projects require globally installed tools. I decided against that: I view the tools as an integral part of the project. This has two advantages: (1) Different projects on a developer’s machine can use different versions of the same tool. (2) Even if the developer has only one project on their machine, our setup rules out using the wrong version of a tool.

Now we are ready for an explanation of the scripts section in our “package.json” file. We have seen that we can’t simply run our tools from the command line; but npm knows how to do that! The elements in the scripts section are aliases for commands to be run via npm run. When npm runs such a command, it adds the local “node_modules/.bin” directory to the PATH, so a command like tsc, which is unknown at the command prompt, resolves to the tsc version installed in the project directory. For example,

npm run build

translates into

eslint *.js && tslint *.ts && tsc && karma start

using the local eslint, tslint, tsc, and karma.
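What npm does for a script can be modeled in a few lines of TypeScript. This is a simplified sketch, not npm’s actual code: it assumes only that npm looks up the script by name and prepends the project’s “node_modules/.bin” directory to the PATH before handing the command to the shell (real npm sets up more than that, such as additional environment variables). The function resolveScript and the project path used below are hypothetical.

```typescript
// Simplified model of `npm run <name>`: look up the script and compute the PATH
// under which the shell will execute it.

interface PackageJson {
    scripts?: { [name: string]: string };
}

interface ScriptInvocation {
    command: string; // command line handed to the shell
    path: string;    // PATH value the shell sees
}

function resolveScript(pkg: PackageJson, name: string, projectDir: string,
                       currentPath: string, isWindows: boolean): ScriptInvocation {
    const command = pkg.scripts && pkg.scripts[name];
    if (command === undefined) {
        throw new Error(`missing script: ${name}`);
    }
    const sep = isWindows ? "\\" : "/";
    const delimiter = isWindows ? ";" : ":";
    const localBin = [projectDir, "node_modules", ".bin"].join(sep);
    // Prepending means the locally installed tsc, karma, ... are found first.
    return { command, path: localBin + delimiter + currentPath };
}

const corePackage: PackageJson = {
    scripts: {
        build: "eslint *.js && tslint *.ts && tsc && karma start",
        quickBuild: "tslint *.ts && tsc && karma start"
    }
};

// Hypothetical checkout location of the Core project.
const inv = resolveScript(corePackage, "quickBuild", "/home/me/GameOfLife/Core", "/usr/bin", false);
console.log(inv.command); // tslint *.ts && tsc && karma start
console.log(inv.path);    // /home/me/GameOfLife/Core/node_modules/.bin:/usr/bin
```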

The definition of our build

As we have just seen, our build consists of just four steps separated by the && operator:

eslint *.js && tslint *.ts && tsc && karma start

Luckily, the && operator works in the Windows command prompt as well as in the Linux and Mac OS shells. An expression command1 && command2 means: execute command1 first, and only if that succeeds, execute command2.
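The behavior of such a chain can be sketched in TypeScript. The steps below are stand-in functions returning exit codes, not the real eslint, tslint, tsc, and karma processes, and the failing tsc step is a hypothetical scenario. As in a POSIX shell, the chain stops at the first non-zero exit code and reports it as the overall result.

```typescript
// Sketch of `step1 && step2 && ...`: each step runs only if all previous steps
// succeeded (exit code 0).

type Step = { name: string; run: () => number }; // run returns an exit code

function runChain(steps: Step[]): { exitCode: number; ran: string[] } {
    const ran: string[] = [];
    for (const step of steps) {
        ran.push(step.name);
        const code = step.run();
        if (code !== 0) {
            // A failure stops the chain and becomes the result of the whole chain.
            return { exitCode: code, ran };
        }
    }
    return { exitCode: 0, ran };
}

// Hypothetical outcome: both linters pass, but tsc reports an error.
const build: Step[] = [
    { name: "eslint *.js", run: () => 0 },
    { name: "tslint *.ts", run: () => 0 },
    { name: "tsc", run: () => 1 },
    { name: "karma start", run: () => 0 }
];

const result = runChain(build);
console.log(result.exitCode, result.ran.join(" -> "));
// 1 eslint *.js -> tslint *.ts -> tsc   (karma never starts)
```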

So our build first runs the JavaScript linter eslint on our JavaScript (not TypeScript!) files. Currently, our only JavaScript files are certain configuration files for Karma. It’s still good to check those, and in the future we may need more JavaScript files even though our project consists essentially of TypeScript.

If eslint succeeds, the TypeScript linter tslint is run on our TypeScript files.

If tslint succeeds, the TypeScript transpiler tsc is run. The parameters of tsc, including its input files, are given in an extra file, “tsconfig.json”.

If tsc succeeds, the test runner karma is run. It is configured by the file “karma.conf.js”.

Admittedly, our build is neither incremental nor capable of parallelism. A build tool like Grunt would provide these missing features, but, as discussed above, at the cost of many extra dependencies and much more configuration. In practice, our minimalistic build works as well as we could wish.
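To make “incremental” concrete: an incremental build skips a step whose outputs are newer than all of its inputs. The sketch below shows that check for a single step. It is a simplification (real build tools also track deleted files, changed options, and so on), and the function name isUpToDate and the timestamps are hypothetical.

```typescript
// Sketch of the up-to-date check an incremental build performs for one step.
// Times are modification timestamps, e.g. milliseconds since the epoch.

function isUpToDate(inputTimes: number[], outputTimes: number[]): boolean {
    if (outputTimes.length === 0) {
        return false; // the step has never produced any output
    }
    const newestInput = Math.max(...inputTimes);
    const oldestOutput = Math.min(...outputTimes);
    // The step must rerun if some input changed after the oldest output was written.
    return newestInput <= oldestOutput;
}

// Hypothetical timestamps for the tsc step.
console.log(isUpToDate([100, 250], [200, 210])); // false: a .ts file changed, rerun tsc
console.log(isUpToDate([100, 150], [200, 210])); // true: skip tsc
```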

The BuildOutput directories

This is a detail that needs mentioning: our Core project and our GameOfLife project each have their own BuildOutput directory.

The BuildOutput directory of Core contains:

- All JavaScript files that result from transpiling Core.

- All ambient declaration files that result from transpiling Core. (As explained in the modules section, these are needed so that other TypeScript code can use Core.)

The BuildOutput directory of GameOfLife contains:

- All JavaScript files that result from transpiling GameOfLife.

- The JavaScript files imported from Core.

- The JavaScript files imported from third parties, here just “require.js”.

- Our HTML and CSS files.

The key feature of the BuildOutput directory of GameOfLife is that it can be used straight away for hosting, as with the hosted GameOfLife application.

(For technical reasons, both BuildOutput directories also contain the JavaScript files that result from transpiling our unit tests. I am considering changing that.)
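Which transpiled files end up in a BuildOutput directory follows mechanically from the TypeScript sources. The sketch below computes that list. It assumes, since the post does not show “tsconfig.json”, that tsc’s outDir option points at BuildOutput and that the declaration option is enabled for Core (this is the option that makes tsc emit .d.ts files). Subdirectories are ignored, and the file names are hypothetical.

```typescript
// Sketch: files tsc writes to BuildOutput for a list of sources.

function expectedOutputs(tsFiles: string[], emitDeclarations: boolean): string[] {
    const result: string[] = [];
    for (const file of tsFiles) {
        // Skip non-TypeScript files and ambient declaration files (.d.ts):
        // tsc reads the latter but emits nothing for them.
        if (!/\.ts$/.test(file) || /\.d\.ts$/.test(file)) {
            continue;
        }
        const base = file.replace(/\.ts$/, "");
        result.push(base + ".js");
        if (emitDeclarations) {
            result.push(base + ".d.ts");
        }
    }
    return result;
}

// Hypothetical Core sources: production code, a unit test, and an ambient declaration.
const coreSources = ["Grid.ts", "GridTests.ts", "qunit.d.ts"];
console.log(expectedOutputs(coreSources, true).join(" "));
// Grid.js Grid.d.ts GridTests.js GridTests.d.ts
console.log(expectedOutputs(coreSources, false).join(" "));
// Grid.js GridTests.js
```

The unit test file produces output like any other source, which is why the test JavaScript also lands in BuildOutput.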

Open issues

Unit tests frequently use mocking: the creation of replacement objects during a unit test, to check how they are used by the production code. For Java and .NET, for example, there are third-party mocking frameworks. Our GameOfLife demo didn’t compel me to use mocking, but mocking in TypeScript should be straightforward: for example, there is the mocking library typemoq. One should try this. (It is available via npm, like all our tools.)
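Part of the reason mocking should be easy is TypeScript’s structural typing: any object with the right members satisfies an interface, so even a hand-written recording mock takes only a few lines. The sketch below uses hypothetical names (CellPainter, paintBoard, RecordingPainter), not code from the GameOfLife repository. A library like typemoq would generate such objects for us; its API is not shown here.

```typescript
// Hand-written mock, no mocking library involved.

interface CellPainter {
    paintCell(row: number, col: number, alive: boolean): void;
}

// "Production" code under test: paints every cell of a board.
function paintBoard(board: boolean[][], painter: CellPainter): void {
    board.forEach((cells, row) =>
        cells.forEach((alive, col) => painter.paintCell(row, col, alive)));
}

// The mock records every call so that the test can check how it was used.
class RecordingPainter implements CellPainter {
    readonly calls: string[] = [];
    paintCell(row: number, col: number, alive: boolean): void {
        this.calls.push(`${row},${col},${alive ? "alive" : "dead"}`);
    }
}

const mock = new RecordingPainter();
paintBoard([[true, false], [false, true]], mock);
console.log(mock.calls.join(" "));
// 0,0,alive 0,1,dead 1,0,dead 1,1,alive
```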

Here is another gap in our investigation: as mentioned in the modules section, frameworks and libraries must be usable from TypeScript via ambient declarations. Our demo uses QUnit in this way. But there are many more frameworks usable from TypeScript.

In particular, there are frameworks for graphical user interfaces that one may want to use from TypeScript. I have not tried any such framework so far. This should usually work, since DefinitelyTyped has ambient declarations for many such frameworks. Still, when starting a TypeScript project, one should check whether the frameworks one plans to use have well-maintained ambient declarations.
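For readers unfamiliar with ambient declarations, here is a minimal sketch of what one looks like. The library tinyStats is hypothetical. To keep the example self-contained, a stand-in implementation is attached to globalThis, playing the role of a plain JavaScript file loaded by the page; in a real project the declare part would live in a .d.ts file, such as those collected by DefinitelyTyped.

```typescript
// Stand-in for an untyped JavaScript library that defines a global object
// "tinyStats" (hypothetical library).
(globalThis as any).tinyStats = {
    mean: (xs: number[]) => xs.reduce((a, b) => a + b, 0) / xs.length
};

// The ambient declaration: tells the compiler that the global exists and what
// its type is. It produces no JavaScript output.
declare const tinyStats: {
    mean(xs: number[]): number;
};

const avg: number = tinyStats.mean([1, 2, 3, 6]); // call is type-checked
console.log(avg); // 3
// tinyStats.mean("1,2,3"); // rejected by tsc: a string is not a number[]
```

If the declaration is missing or outdated, the compiler either rejects valid calls or accepts wrong ones, which is why the quality of the ambient declarations matters.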